The Spark That Changed Everything: How Electricity Electrified Human Civilization

Flip a switch, and light appears. Press a button, and your room cools down. Tap a screen, and the world’s knowledge sits at your fingertips. We take electricity so completely for granted that we barely notice it until a power outage reminds us how dependent we have become. Yet our ability to harness this invisible force is remarkably recent. The story of electricity is the story of human curiosity, brilliant accidents, cutthroat competition, and one of the most profound transformations of daily life in history.

The Mystery Before the Mastery

For most of human history, electricity remained a mysterious curiosity. Ancient Greeks noticed that amber (which they called elektron), once rubbed, attracted lightweight objects. The Romans observed electric fish delivering surprising shocks. But no one connected these phenomena, and certainly no one imagined they could be harnessed for practical use.

For centuries, the primary sources of light and heat remained fire, candles, and oil lamps. The rhythms of daily life were dictated by the sun. People rose at dawn and retired at dusk. Nighttime activities were limited to those who could afford expensive candles or oil. The very concept of extending productive hours beyond daylight was a luxury few could imagine.

Then came the Age of Enlightenment, and with it, scientists began systematically exploring electrical phenomena. In 1729, Stephen Gray discovered that some materials conduct electricity while others insulate. In 1752, Benjamin Franklin’s famous kite experiment demonstrated that lightning was electrical in nature. These were crucial insights, but electricity remained a laboratory curiosity: fascinating but seemingly useless.

The Battery: Electricity in a Jar

Everything changed in 1800, when Alessandro Volta, an Italian physicist, invented the first true battery. By stacking alternating discs of zinc and copper, separated by brine-soaked cloth, Volta created a continuous flow of electricity. This was revolutionary. For the first time, scientists could study electricity without relying on weather conditions or static friction. They had a reliable source of electrical current.

Volta’s invention opened the floodgates of experimentation. Within a decade, Humphry Davy used batteries to isolate previously unknown chemical elements by passing electric current through compounds. Michael Faraday, a self-taught English scientist, discovered electromagnetic induction in 1831: the principle that a changing magnetic field produces an electric current. This insight would become the foundation for most electrical power generation used today.

The Birth of Electric Light

While scientists explored electrical theory, practical minds dreamed of electric light. Arc lamps, which created light by sustaining an electric arc between two carbon rods, appeared in the early 1800s. They were impressively bright but impractical for indoor use: the light was harsh, the equipment noisy, and the rods burned away quickly.

The race to create a practical incandescent bulb became one of history’s most famous technological competitions. Inventors across Europe and America experimented with different filament materials, vacuum techniques, and bulb designs. The challenge was immense: find a material that glowed when heated by electricity but did not quickly burn away.

Joseph Swan in Britain demonstrated a working incandescent lamp in 1878. Thomas Edison, working independently in America, filed for a patent on his version in 1879. Edison’s contribution was not merely the bulb itself but an entire system: generators, wiring, switches, and meters. He did not just invent a light; he invented an industry.

The War of Currents

As electric power systems expanded, a bitter conflict emerged between two competing visions. Edison championed direct current (DC), where electricity flows in one direction. His former employee Nikola Tesla, backed by industrialist George Westinghouse, advocated alternating current (AC), where the direction of flow reverses periodically.

The technical differences mattered enormously. With the technology of the era, DC could not easily be stepped up to high voltage, so it had to be sent at low voltage and high current, and it lost too much energy as heat in the wires over long distances. This meant power plants needed to be close to consumers, limiting the reach of electrical service. AC power, however, could be transformed to high voltages for efficient long-distance transmission, then stepped back down to safe levels for home use.
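
The voltage argument can be made concrete with a little arithmetic. The sketch below is a simplified illustration, not a model of any historical system: it treats the line as a single resistance, ignores AC effects such as reactance, and uses made-up figures for the power sent and the line resistance. For a fixed amount of power, raising the voltage lowers the current, and the heat lost in the wire scales with the square of that current.

```python
def line_loss_fraction(power_w: float, voltage_v: float, resistance_ohm: float) -> float:
    """Fraction of the power sent that is lost as heat in the line.

    Simplified model: current I = P / V, resistive loss = I^2 * R.
    """
    current_a = power_w / voltage_v
    loss_w = current_a ** 2 * resistance_ohm
    return loss_w / power_w


# Hypothetical figures chosen only for illustration.
POWER_W = 100_000.0          # power sent down the line
LINE_RESISTANCE_OHM = 0.5    # total resistance of the wire

for voltage in (1_000.0, 10_000.0, 100_000.0):
    fraction = line_loss_fraction(POWER_W, voltage, LINE_RESISTANCE_OHM)
    print(f"{voltage:>9,.0f} V: {fraction:.4%} of the power lost as heat")
```

Under these assumptions, each tenfold increase in voltage cuts the loss by a factor of one hundred. The physics is the same for AC and DC; what gave AC the edge was that transformers made it easy to reach those high voltages and step them back down.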

The conflict turned ugly. Edison, desperate to protect his investments, launched a propaganda campaign portraying AC as dangerously lethal. He arranged public electrocutions of animals to demonstrate AC’s hazards. Despite these efforts, AC’s technical advantages prevailed. The 1893 World’s Columbian Exposition in Chicago featured a stunning AC-powered light display that captivated millions. Later that year, AC won the contract to harness Niagara Falls for electrical generation. The war of currents was over. AC had won, and the modern electrical grid was born.

Electrifying the World

The early electrical grid was an urban phenomenon. Cities lit up first—streetlights replaced gas lamps, factories installed electric motors, wealthy homes added electric lighting. Rural areas remained dark. Running power lines to scattered farms made little economic sense to utility companies.

In the United States, this changed dramatically with the Rural Electrification Administration, created in 1935 as part of President Franklin Roosevelt’s New Deal. Government loans enabled the formation of rural electric cooperatives that brought power to remote farms. The percentage of American farms with electricity jumped from about 10 percent in 1935 to more than 90 percent by the mid-1950s. Similar programs followed around the world at varying speeds.

Electrification transformed rural life fundamentally. Farmers gained electric pumps for water, milking machines for dairy operations, and refrigeration for storing produce. Those who ran the household, predominantly women in this era, saw their workloads decrease dramatically with electric washing machines, irons, and vacuum cleaners. The electrical revolution was also a domestic revolution.

The Appliance Revolution

Once electricity entered homes, inventors created an avalanche of appliances to consume it. The electric iron appeared in the 1880s, an immediate improvement over heavy flatirons that required constant reheating on stoves. Electric fans provided relief from summer heat. The electric toaster, invented in 1893, eliminated the need to hold bread over an open flame.

Refrigeration was perhaps the most transformative appliance. Before electric refrigerators, families relied on iceboxes, which required regular deliveries of ice blocks, and food spoiled quickly. Electric refrigerators, which became affordable in the 1920s and 1930s, changed shopping habits entirely. Families could buy food in larger quantities, store leftovers safely, and keep fresh produce far longer.

Radio brought entertainment and news into living rooms. Television, becoming widespread in the 1950s, created a shared cultural experience across nations. Air conditioning, initially a commercial and industrial technology, transformed the American South and Southwest, making previously unbearable climates comfortable for millions.

Each new appliance created new expectations. A home without a refrigerator became unthinkable. A business without air conditioning lost employees to competitors. Electricity had shifted from a luxury to a necessity, and the definition of basic living standards had permanently changed.

Electronics and the Digital Age

The story of electricity did not end with appliances. The invention of the transistor in 1947 at Bell Laboratories opened an entirely new chapter. Transistors could amplify and switch electrical signals with no moving parts. They were smaller, more reliable, and consumed far less power than the vacuum tubes they replaced.

Transistors led to integrated circuits, then microprocessors, then the entire digital revolution. Computers shrank from room-sized machines to devices that fit in pockets. The internet emerged, connecting electrical devices across the globe. Smartphones, themselves electrical devices, became control centers for other electrical devices—thermostats, lights, security systems, and cars.

Today, the average home contains dozens of electrical devices that would have seemed magical to earlier generations. We carry powerful computers in our pockets. We work remotely, attend virtual meetings, and stream entertainment—all dependent on reliable electrical power and the networks it enables.

Political and Economic Transformation

Electricity reshaped political and economic structures profoundly. Industrial production, once limited to areas near water power or coal deposits, became geographically flexible. Manufacturing could locate wherever labor and transportation were favorable, not necessarily near primary energy sources.

Nations recognized that electrification was essential for economic development. Soviet planners made electrification a central goal—Lenin famously declared that Communism equaled Soviet power plus electrification. Newly independent nations after World War II prioritized electrical infrastructure as a prerequisite for industrialization.

Control over electrical infrastructure became a source of political power. Electric utilities were often state-owned monopolies or heavily regulated private companies. Blackouts could topple governments. Brownouts sparked protests. The reliability of electrical supply became a metric of governance quality.

The energy crises of the 1970s, when oil prices skyrocketed, exposed vulnerabilities in electrical systems dependent on fossil fuels. Nations began diversifying their electrical generation, building nuclear plants, and eventually investing in renewable sources like wind and solar. The politics of electricity became intertwined with the politics of energy security and environmental protection.

Lifestyle Revolution

Consider how electricity changed daily rhythms. Before electrification, work schedules followed the sun. After electrification, factories could operate continuously, and shifts became possible. The night shift was born. Entertainment became available on demand—radio programs, television shows, recorded music. Social life no longer needed to conclude at sunset.

Electric lighting extended working hours but also created new forms of leisure. Movie theaters, restaurants, and nightclubs flourished under electric lights. Sports events could be held at night. The very concept of nightlife as we know it exists because of electricity.

Electricity also enabled urbanization at an unprecedented scale. Tall buildings require electric elevators. Dense populations need electric water pumps and sewage systems. Modern cities, with their skylines lit against the night, are impossible without electrical infrastructure.

The always-on nature of electrical devices has created new challenges. Artificial light disrupts sleep patterns. Screens compete for attention with work and relationships. The boundaries between work and personal life have blurred as email and messaging make workers accessible at any hour. Electricity solved old problems but created new ones.

Environmental Consequences

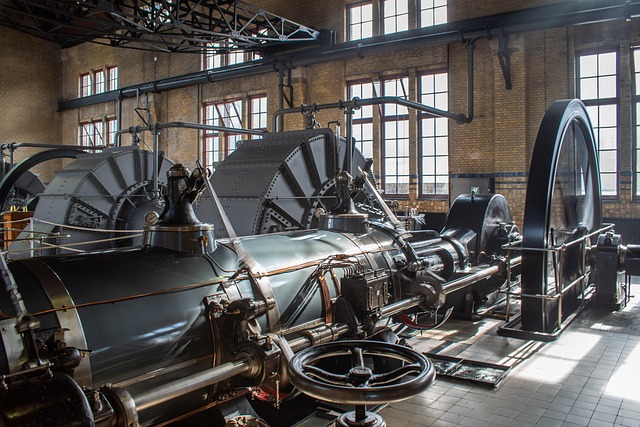

The environmental impact of electrical generation is one of the defining challenges of our era. For most of electrical history, the primary generation method involved burning fossil fuels—coal, natural gas, and oil—to produce steam that drives turbines. The carbon dioxide released contributes directly to climate change.

Coal-fired power plants, in particular, have been massive sources of air pollution. Sulfur dioxide emissions caused acid rain. Particulate matter damaged respiratory health. Mercury contamination spread through food chains. The environmental costs of cheap electricity were externalized—borne by society rather than reflected in electricity prices.

Hydroelectric dams, while producing clean electricity, disrupted river ecosystems, flooded valleys, and displaced communities. Nuclear power, despite producing minimal carbon emissions, generated radioactive waste that remains hazardous for thousands of years. The 1986 Chernobyl disaster and 2011 Fukushima disaster demonstrated the catastrophic potential of nuclear accidents.

Only recently have renewable sources like wind and solar become cost-competitive. Their environmental footprint is dramatically lower, though not zero—manufacturing solar panels and wind turbines requires mining and industrial processes. The transition to cleaner electricity generation is underway but incomplete. How quickly it proceeds will shape the climate future we leave to coming generations.

The Future of Electrification

As we look forward, electricity’s role continues to expand. Electric vehicles are replacing internal combustion engines, potentially reducing transportation emissions—if the electricity is generated cleanly. Heat pumps are replacing gas furnaces, electrifying home heating. Smart grids are making electrical systems more efficient by matching supply and demand in real time.

Yet billions of people still lack reliable access to electricity. Energy poverty remains a global challenge. Extending electrical infrastructure to underserved regions—ideally using clean generation methods—represents both a moral imperative and an economic opportunity.

The story of electricity is far from over. What began with ancient Greeks rubbing amber has become the circulatory system of modern civilization. Every aspect of contemporary life—communication, transportation, healthcare, entertainment, food production—depends on the reliable flow of electrons through wires and circuits.

We have come a long way from candlelit evenings and sun-determined schedules. And as we face the challenges of climate change and extend electrical access to all of humanity, we are still writing the story of how humans harnessed one of nature’s fundamental forces and changed everything.